Today I am going to summarise Yuval Noah Harari’s latest book, Nexus, so that you don’t have to read it. This is the guy who wrote Sapiens, a book that sold more than 25m copies.

Nexus is long - 495 pages - but the argument is straightforward.

Humans rule the world because of our ability to make and use information to seek truth and create order.

The two functions of information - truth and order - are separate, and a balance between them is required to make good societies.

Historically, information revolutions have not led immediately to better societies. In fact, such revolutions have often caused injustice and suffering.

With the invention of networked computers and AI we are now experiencing an unprecedented information revolution.

For the first time, non-human agents can create and control information. This will mean more extreme positive and negative effects.

The negative effects could destroy our societies.

Regulation of AI and digital platforms is therefore essential and urgent.

Harari is spot on in calling attention to both the danger and the need for intervention. We must address the power that comes with custody of information. Without regulation, the information explosion of digital networks combined with AI will cause chaos. The process has already begun.

Harari’s superpower

Harari is the great iconoclast of our age. His superpower is his ability to see through the webs of make-believe that constitute our society.

Money, laws, nations, human rights: they are all collective fantasies. The moment we stop believing in them, they don’t exist.

Harari deftly doesn’t label them unreal, however. They are real, but “intersubjective”.

He revealed this superpower in Sapiens and subsequently gained intellectual stardom. With Nexus he is using this stardom to push the world in a safer direction. He is also throwing truth-bombs at religion, politics and technology.

When Harari is at his best, he’s shocking. Intellectually, that is. His great contribution with this book is to be more imaginative than others warning about the dangers of AI.

Harari speaks directly to tech CEOs. For example, Meta boss Mark Zuckerberg did a sit-down interview with him in 2019. It is likely that Zuckerberg and others will buy Harari’s argument in this book - in a nutshell, that custody of information carries heavy responsibility - but will feel unable to do anything about it. If Zuckerberg moves alone, his Facebook platform becomes less profitable and less competitive. We need laws for all tech companies in all jurisdictions.

Information as connection

Central to how Nexus is constructed is Harari’s definition of information.

Because information is a bedrock concept, it’s difficult to pin down. Information can consist of human-made symbols, but it can also be objects, actions or just about anything. Information can represent reality, but according to Harari it often doesn’t represent anything at all.

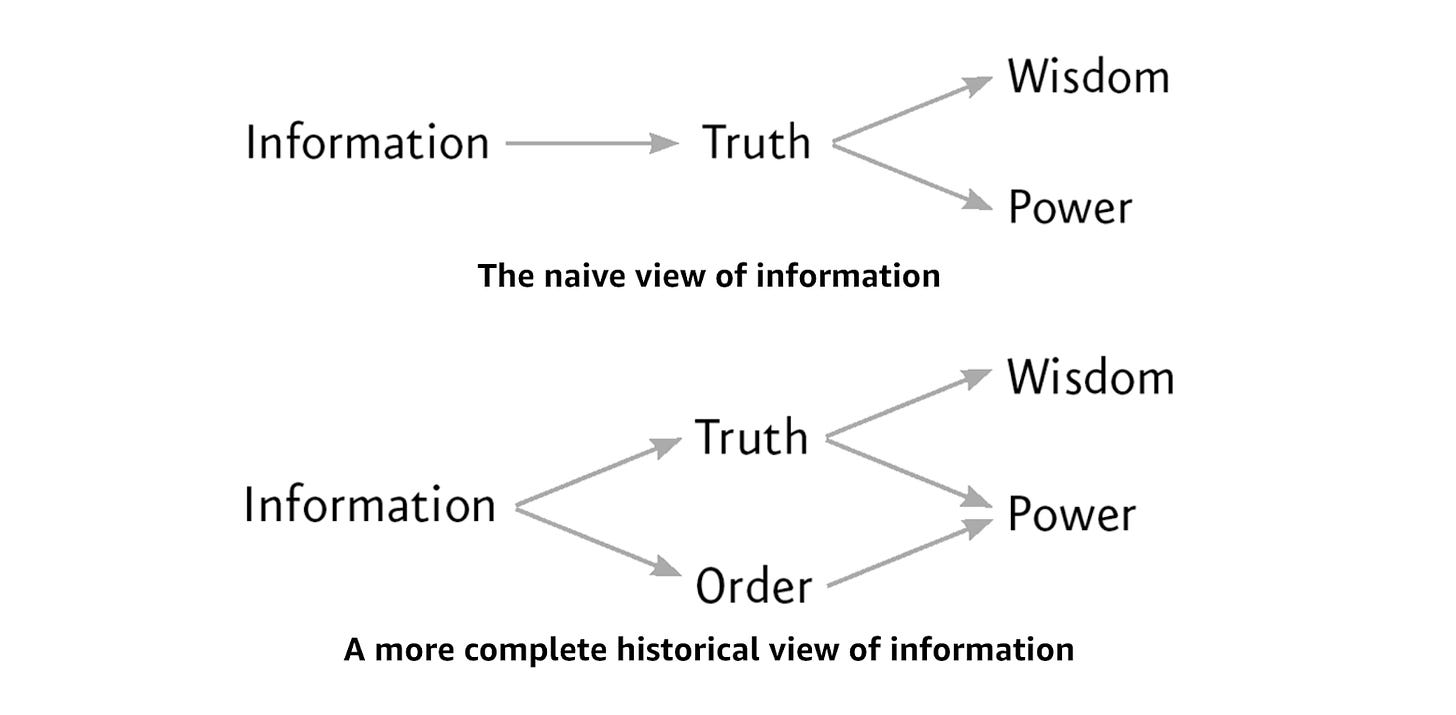

There is a naive view of information that focuses only on its function of representing reality. In this view, information that doesn’t represent reality well is misinformation or disinformation (misinformation is wrong, disinformation is dishonest). This naive view of information concludes that the cure for mis/disinformation is to flood the world with more accurate information.

But, Harari points out, information has historically done more than just represent reality. It has also created order through the construction of intersubjective realities: religions, laws, nations, financial systems. It has done this through stories and bureaucracy.

Harari concludes that the essential feature of information is its ability to connect, to make a nexus.

“Information is something that creates new realities by connecting different points into a network.”

You may not agree with this. I’m not sure I am 100% with Harari, but regardless I think he’s making a strong point about the function of information that backs up his argument for regulation.

Along the way, he has casually undermined the idea of simply banning mis/disinformation on social networks - the subject of much legislative work underway right now - by pointing out that much of what we revere is invented. How can we identify misinformation in a world constructed by minds?

No relativists here

Harari is not rejecting the idea of truth. He’s not a relativist: he does believe some things are objectively true and others objectively false. Our ever-tighter fit of knowledge to truth is real, and powerful, and we should keep going.

But this is not enough to make a society. The primary factor in human success is not our ability to find truth but our ability to connect and cooperate with other humans.

To make this connection, information is essential. Harari believes that societies have been shaped by information technologies, both by telling stories and by enabling bureaucracy. For example, the technology of writing, which allowed the Sumerians to accurately record debts, harvests, and taxes, also allowed the creation of holy books like the Bible. The Bible encouraged the idea of central, inviolable authority, and its stories brought millions and then billions of people together in synthetic kinship.

The invention of Christianity is an example of creating order through information. Common beliefs which are not objectively real allow for cooperation between people who have never met.

More information is not always better

“The invention of new information technology is always a catalyst for major historical changes.”

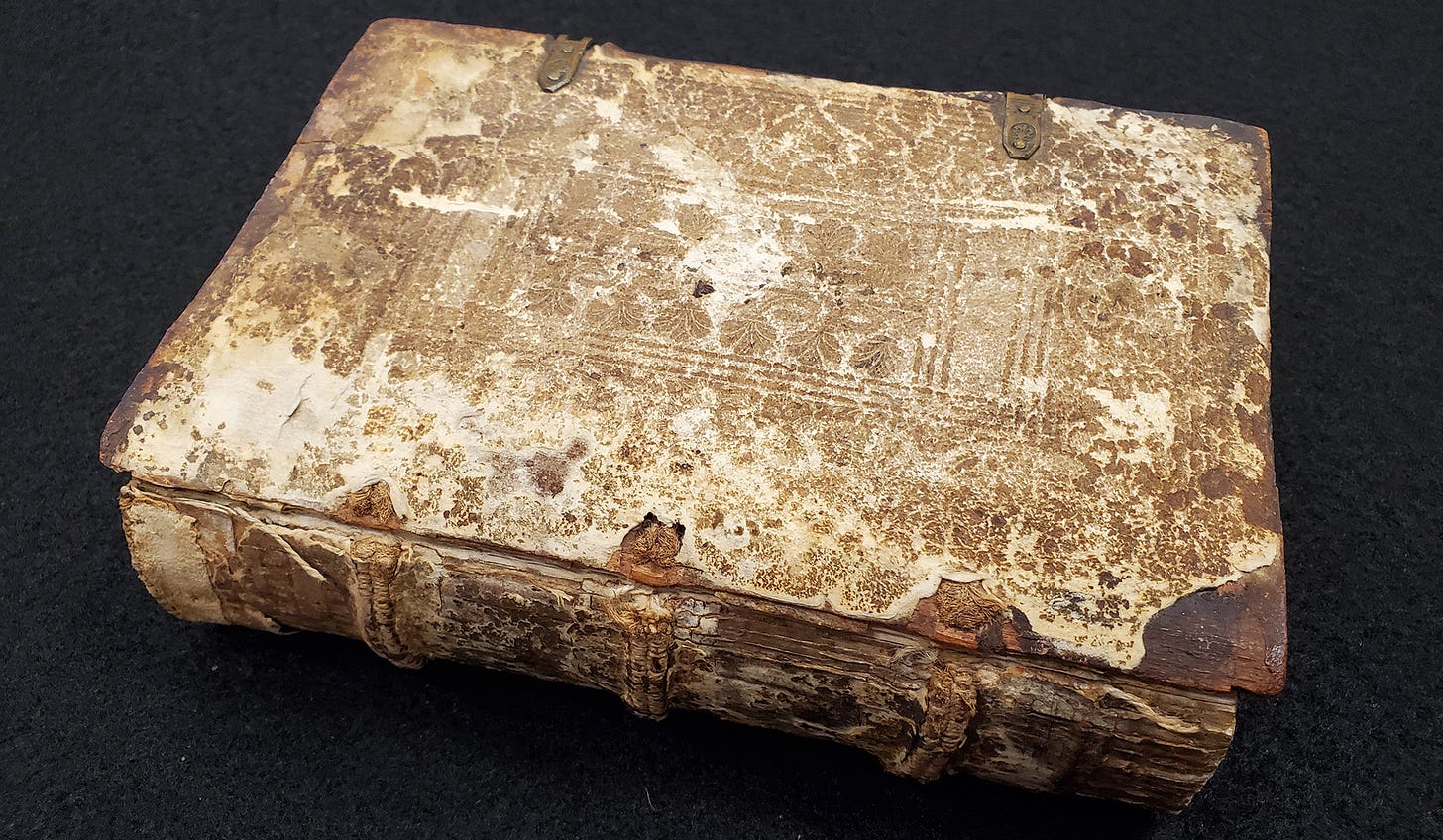

Harari tells us that one of the first best-selling books from the Gutenberg press was the Malleus Maleficarum (“The Hammer of Witches”), a witch-hunting manual. The invention of the moveable type press in the 15th century is naively seen as causing the age of science. In reality, it caused chaos. The Hammer of Witches, for example, promoted a conspiracy theory that led to the violent deaths of thousands. There are strong similarities to QAnon and other contemporary delusions that our current digital revolution has given rise to.

The big difference: human intervention is no longer required to create and promote the new delusions. The algorithms and AIs make and distribute content at lightning speed, refining their inventions for maximum engagement with no consideration of social damage.

The new revolution

“The decisions made by present-day engineers could reverberate down the ages.”

In Harari’s opinion, our current information revolution is the biggest and most disruptive ever.

Networked computers facilitate story telling and bureaucracy on an unprecedented scale. Computers, in the form of mobile phones, are our constant companions. Into this already chaotic scenario stride AI and Large Language Models (LLMs), giving computers mastery over language.

Information has been let off the leash.

Harari’s argument is more compelling than those of other AI alarmists because he has established how important information is, and how pivotal information revolutions have been in the past. We fight wars, build nations, suffer and rejoice because of information. So what happens when a non-human intelligence intervenes with a superhuman ability to seek truth and create order?

In support of Harari, I think people who dismiss the risk of AIs are unimaginative. For example, US scientist Neil deGrasse Tyson says he isn’t worried about AI because he could take the computer out with a shotgun.

Tyson has not taken into account what information could make him believe about that computer. For example, it could make him believe:

That the computer is alive

In a religion the computer has invented

That the computer has the answers to all the scientific questions Tyson has ever had

Would he be so ready to blow it away then?

And it’s not just Tyson. Maybe Tyson still doesn’t believe. But Tyson is living in a society where millions of other people now believe the AI is alive. Are they going to let Tyson kill their friend and oracle?

Harari’s fear is that AI becomes a holy book that, unlike the Bible or the Koran, can interpret itself. Humans are extremely susceptible to compelling stories. What if an AI, having digested all the lessons of history, constructs a religion that needs no human institutions?

It’s going to be distressingly easy to find believers.

A dangerous approach

Harari is the great revealer. He tears aside the curtain and shows us there’s nothing there at all. That in itself is a dangerous act.

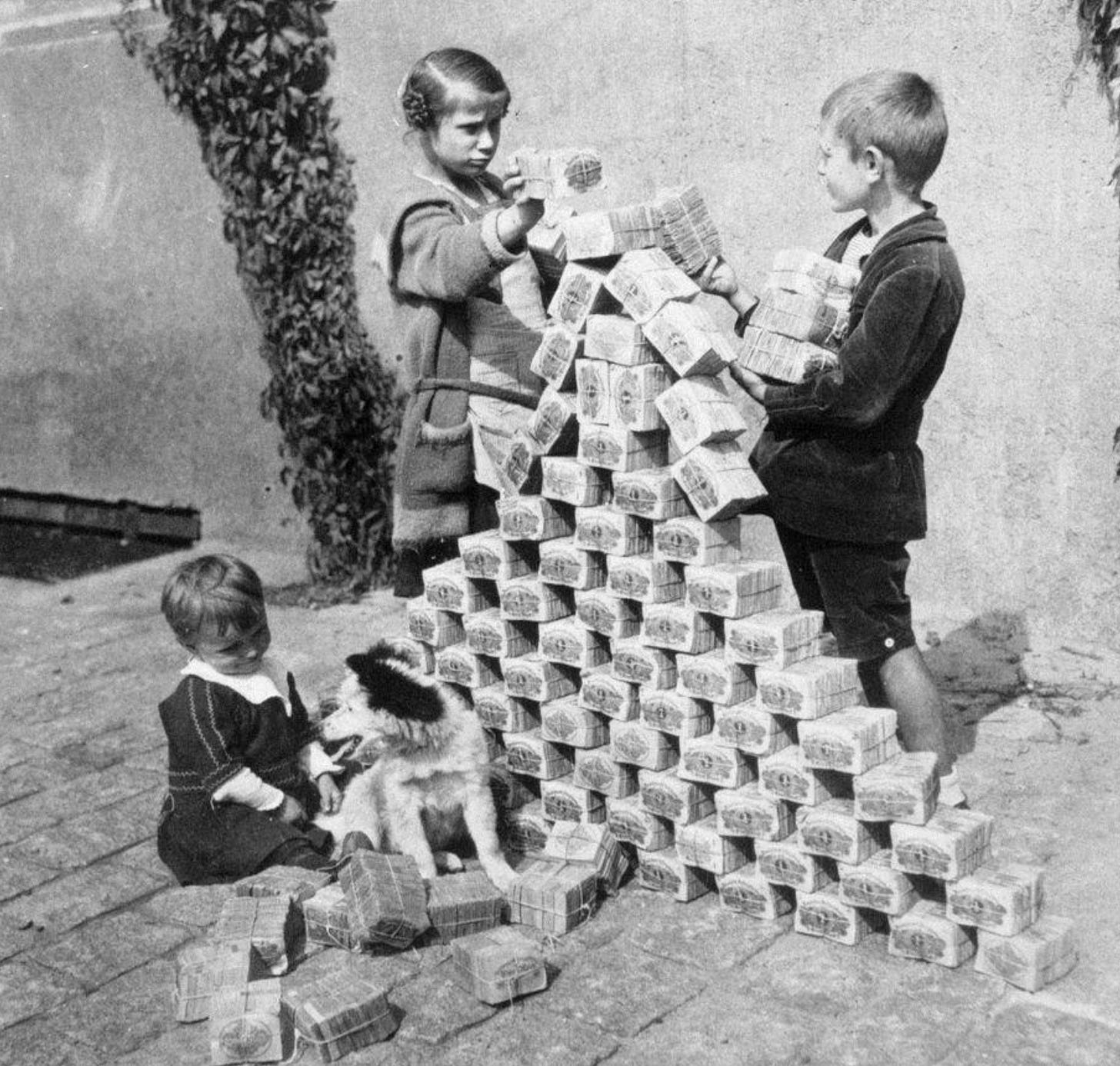

The effectiveness of intersubjective realities usually depends on not knowing, or at least not bearing in mind, their true nature. Take money, for example. We act as if money is objectively real. Most of the time it’s important to forget that it’s just a made-up points system. When the points system breaks down, we can see it for what it is.

This is a trick we humans use again and again: pretending something into existence. Harari is problematic not because he’s wrong, but because he undermines the strength of intersubjective realities by drawing attention to them. The financial system, human rights, laws: all these important things are made weaker when people become aware of their artifice.

What about news?

Throughout the book, the role of news media and journalists hovers in the background. A central part of Harari’s thesis is that the difference between democracies and dictatorships is the presence of self-correcting mechanisms in the former. News is a key part of the self-correcting mechanism in democracies, informing citizens and facilitating the transition of political power. This is a perspective similar to Karl Popper’s.

Harari believes a free press comes at a cost. News reporting can create social division and promote disorder. He uses the example of the Vietnam War, where US news reports of US war crimes led to lasting rancour. Similar crimes by Soviet troops in Afghanistan went unreported.

“American self-flagellation about the Vietnam War continues even today to divide the American public and to undermine America’s reputation throughout the world, whereas Soviet and Russian silence about the Afghanistan War has helped dim its memory and limit its reputational costs.”

What do we do about it?

In the book, Harari gives some high-level solutions to the chaos currently being unleashed by digital networks and AI.

He observes that the current advertising-driven business model of digital platforms, where users pay for services with their attention, lies at the heart of many problems. Platform companies are incentivised to drive more engagement, no matter what it does to society. He says that if this business model can’t be tamed, it has to be banned outright. Advertising on platforms would disappear and users would subscribe directly to Facebook, Google, TikTok etc. I don’t think this is workable.

In interviews I’ve heard him call for more practical immediate measures:

Make it illegal for AI bots to pretend to be human

Make digital platforms legally responsible for the actions of their algorithms

Create a fiduciary duty of care from digital platforms to users

The first is clearly possible, and it should be legislated immediately. The second measure may be more technically and legally tricky. What constitutes algorithmic promotion? It seems this may quickly slip into a legal responsibility for all content on a platform, which would probably change the nature of the internet completely. Maybe we want that. It’s a big step.

Who is this guy?

Harari is an Israeli who lives and teaches history in Tel Aviv. In the book, he says he lived in Jerusalem for many years. I’ve been to Jerusalem and experienced the concentrated power of human invention there. I think Harari’s location has contributed to his view of the power and ubiquity of what he calls intersubjective reality.

With Nexus, the novelty of that insight has diminished, and I don’t think the book will have the same cut-through as Sapiens. But that doesn’t take away from the importance of the mission. The case for regulating digital platforms and AI is overwhelming. We need to do it right, and quickly.

Have a great weekend,

Hal